< User:Crantila | FSC

m (changed location of images) |

(revised to incorporate Rlandmann's comments (sent by email); added DocBook address; hid addresses) |

||

| Line 1: | Line 1: | ||

These terms are used in many different audio contexts. Understanding them is important to knowing how to operate audio equipment in general, whether computer-based or not. | These terms are used in many different audio contexts. Understanding them is important to knowing how to operate audio equipment in general, whether computer-based or not. | ||

<!-- | |||

Address: User:Crantila/FSC/Audio_Vocabulary | Address: User:Crantila/FSC/Audio_Vocabulary | ||

DocBook: "Audio_Vocabulary.xml" | |||

--> | |||

=== MIDI Sequencer === | === MIDI Sequencer === | ||

A '''sequencer''' is a device or software | A '''sequencer''' is a device or software program that produces signals that a synthesizer turns into sound. You can also use a sequencer to arrange MIDI signals into music. The Musicians' Guide covers two digital audio workstations (DAWs) that are primarily MIDI sequencers, Qtractor and Rosegarden. All three DAWs in this guide use MIDI signals to control other devices or effects. | ||

=== Busses, Master Bus, and Sub-master Bus === | === Busses, Master Bus, and Sub-master Bus === | ||

| Line 12: | Line 13: | ||

[[File:FMG-master_sub_bus.png|200px|The relationship between the master bus and sub-master busses.]] | [[File:FMG-master_sub_bus.png|200px|The relationship between the master bus and sub-master busses.]] | ||

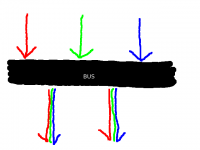

An '''audio bus''' | An '''audio bus''' sends audio signals from one place to another. Many different signals can be inputted to a bus simultaneously, and many different devices or applications can read from a bus simultaneously. Signals inputted to a bus are mixed together, and cannot be separated after entering a bus. All devices or applications reading from a bus receive the same signal. | ||

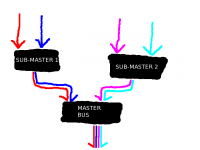

All audio routed out of a program passes through the master bus. The '''master bus''' combines all audio tracks, allowing for final level adjustments and simpler mastering. The primary purpose of the master bus is to mix all of the tracks into two channels. | |||

A '''sub-master bus''' combines audio signals before they reach the master bus. Using a sub-master bus is optional. They allow you to adjust more than one track in the same way, without affecting all the tracks. | |||

Audio busses are also used to send audio into effects processors. | |||

=== Level (Volume/Loudness) === | === Level (Volume/Loudness) === | ||

The perceived '''volume''' or '''loudness''' of | The perceived '''volume''' or '''loudness''' of sound is a complex phenomenon, not entirely understood by experts. One widely-agreed method of assessing loudness is by measuring the sound pressure level (SPL), which is measured in decibels (dB) or bels (B, equal to ten decibels). In audio production communities, this is called "level." The '''level''' of an audio signal is one way of measuring the signal's perceived loudness. The level is part of the information stored in an audio file. | ||

There are many different ways to monitor and adjust the level of an audio signal, and there is no widely-agreed practice. One reason for this situation is the technical limitations of recorded audio. Most level meters are designed so that the average level is -6 dB on the meter, and the maximum level is 0 dB. This practice was developed for analog audio. We recommend using an external meter and the "K-system," described in a link below. The K-system for level metering was developed for digital audio. | |||

In the Musicians' Guide, this term is called "volume level," to avoid confusion with other levels, or with perceived volume or loudness. | |||

For more information, refer to these web pages: | For more information, refer to these web pages: | ||

| Line 39: | Line 42: | ||

<!-- [[File:FMG-Balance_and_Panning.xcf]] --> | <!-- [[File:FMG-Balance_and_Panning.xcf]] --> | ||

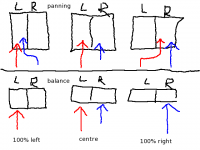

'''Panning''' | '''Panning''' adjusts the portion of a channel's signal that is sent to each output channel. In a stereophonic (two-channel) setup, the two channels represent the "left" and the "right" speakers. Two channels of recorded audio are available in the DAW, and the default setup sends all of the "left" recorded channel to the "left" output channel, and all of the "right" recorded channel to the "right" output channel. Panning sends some of the left recorded channel's level to the right output channel, or some of the right recorded channel's level to the left output channel. Each recorded channel has a constant total output level, which is divided between the two output channels. | ||

The default setup for a left recorded channel is for "full left" panning, meaning that 100% of the output level is output to the left output channel. An audio engineer might adjust this so that 80% of the recorded channel's level is output to the left output channel, and 20% of the level is output to the right output channel. An audio engineer might make the left recorded channel sound like it is in front of the listener by setting the panner to "center," meaning that 50% of the output level is output to both the left and right output channels. | |||

Balance | Balance is sometimes confused with panning, even on commercially-available audio equipment. Adjusting the '''balance''' changes the volume level of the output channels, without redirecting the recorded signal. The default setting for balance is "center," meaning 0% change to the volume level. As you adjust the dial from "center" toward the "full left" setting, the volume level of the right output channel is decreased, and the volume level of the left output channel remains constant. As you adjust the dial from "center" toward the "full right" setting, the volume level of the left output channel is decreased, and the volume level of the right output channel remains constant. If you set the dial to "20% left," the audio equipment would reduce the volume level of the right output channel by 20%, increasing the perceived loudness of the left output channel by approximately 20%. | ||

You should adjust the balance so that you perceive both speakers as equally loud. Balance compensates for poorly set up listening environments, where the speakers are not equal distances from the listener. If the left speaker is closer to you than the right speaker, you can adjust the balance to the right, which decreases the volume level of the left speaker. This is not an ideal solution, but sometimes it is impossible or impractical to set up your speakers correctly. You should adjust the balance only at final playback. | |||

=== Time, Timeline and Time-Shifting === | === Time, Timeline and Time-Shifting === | ||

There are many ways to measure musical time. The four most popular scales for digital audio are: | There are many ways to measure musical time. The four most popular time scales for digital audio are: | ||

* Bars and Beats: Usually used for MIDI work, and called "BBT," meaning "Bars, Beats, and Ticks." A tick is a partial beat. | * Bars and Beats: Usually used for MIDI work, and called "BBT," meaning "Bars, Beats, and Ticks." A tick is a partial beat. | ||

* Minutes and Seconds: Usually used for audio work. | * Minutes and Seconds: Usually used for audio work. | ||

| Line 54: | Line 57: | ||

* Samples: Relating directly to the format of the underlying audio file, a sample is the shortest possible length of time in an audio file. See [[User:Crantila/FSC/Sound_Cards#Sample_Rate|this section]] for more information on samples. | * Samples: Relating directly to the format of the underlying audio file, a sample is the shortest possible length of time in an audio file. See [[User:Crantila/FSC/Sound_Cards#Sample_Rate|this section]] for more information on samples. | ||

Most audio software, particularly | Most audio software, particularly digital audio workstations (DAWs), allow the user to choose which scale they prefer. DAWs use a '''timeline''' to display the progression of time in a session, allowing you to do '''time-shifting'''; that is, adjust the time in the timeline when a region starts to be played. | ||

Time is | Time is represented horizontally, where the leftmost point is the beginning of the session (zero, regardless of the unit of measurement), and the rightmost point is some distance after the end of the session. | ||

=== Synchronization === | === Synchronization === | ||

'''Synchronization''' is | '''Synchronization''' is synchronizing the operation of multiple tools, frequently the movement of the transport. Synchronization also controls automation across applications and devices. MIDI signals are usually used for synchronization. | ||

=== Routing and Multiplexing === | === Routing and Multiplexing === | ||

| Line 65: | Line 68: | ||

<!-- [[FMG-routing_and_multiplexing.xcf]] --> | <!-- [[FMG-routing_and_multiplexing.xcf]] --> | ||

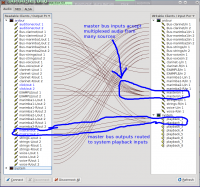

'''Routing''' audio | '''Routing''' audio transmits a signal from one place to another - between applications, between parts of applications, or between devices. On Linux systems, the JACK Audio Connection Kit is used for audio routing. JACK-aware applications (and PulseAudio ones, if so configured) provide inputs and outputs to the JACK server, depending on their configuration. The QjackCtl application can adjust the default connections. You can easily reroute the output of a program like FluidSynth so that it can be recorded by Ardour, for example, by using QjackCtl. | ||

'''Multiplexing''' | '''Multiplexing''' allows you to connect multiple devices and applications to a single input or output. QjackCtl allows you to easily perform multiplexing. This may not seem important, but remember that only one connection is possible with a physical device like an audio interface. Before computers were used for music production, multiplexing required physical devices to split or combine the signals. | ||

=== Multichannel Audio === | === Multichannel Audio === | ||

An '''audio channel''' is a single path | An '''audio channel''' is a single path of audio data. '''Multichannel audio''' is any audio which uses more than one channel simultaneously, allowing the transmission of more audio data than single-channel audio. | ||

Audio was originally recorded with only one channel, producing "monophonic," or "mono" recordings. Beginning in the 1950s, stereophonic recordings, with two independent channels, | Audio was originally recorded with only one channel, producing "monophonic," or "mono" recordings. Beginning in the 1950s, stereophonic recordings, with two independent channels, began replacing monophonic recordings. Since humans have two independent ears, it makes sense to record and reproduce audio with two independent channels, involving two speakers. Most sound recordings available today are stereophonic, and people have found this mostly satisfying. | ||

There is a growing trend | There is a growing trend toward five- and seven-channel audio, driven primarily by "surround-sound" movies, and not widely available for music. Two "surround-sound" formats exist for music: DVD Audio (DVD-A) and Super Audio CD (SACD). The development of these formats, and the devices to use them, is held back by the proliferation of headphones with personal MP3 players, a general lack of desire for improvement in audio quality amongst consumers, and the copy-protection measures put in place by record labels. The result is that, while some consumers are willing to pay higher prices for DVD-A or SACD recordings, only a small number of recordings are available. Even if you buy a DVD-A or SACD-capable player, you would need to replace all of your audio equipment with models that support proprietary copy-protection software. Without this equipment, the player is often forbidden from outputting audio with a higher sample rate or sample format than a conventional audio CD. None of these factors, unfortunately, seem like they will change in the near future. | ||

Latest revision as of 05:55, 2 August 2010

These terms are used in many different audio contexts. Understanding them is important to knowing how to operate audio equipment in general, whether computer-based or not.

MIDI Sequencer

A sequencer is a device or software program that produces signals that a synthesizer turns into sound. You can also use a sequencer to arrange MIDI signals into music. The Musicians' Guide covers two digital audio workstations (DAWs) that are primarily MIDI sequencers, Qtractor and Rosegarden. All three DAWs in this guide use MIDI signals to control other devices or effects.

Busses, Master Bus, and Sub-master Bus

An audio bus sends audio signals from one place to another. Many different signals can be inputted to a bus simultaneously, and many different devices or applications can read from a bus simultaneously. Signals inputted to a bus are mixed together, and cannot be separated after entering a bus. All devices or applications reading from a bus receive the same signal.

All audio routed out of a program passes through the master bus. The master bus combines all audio tracks, allowing for final level adjustments and simpler mastering. The primary purpose of the master bus is to mix all of the tracks into two channels.

A sub-master bus combines audio signals before they reach the master bus. Using a sub-master bus is optional. They allow you to adjust more than one track in the same way, without affecting all the tracks.

Audio busses are also used to send audio into effects processors.

Level (Volume/Loudness)

The perceived volume or loudness of sound is a complex phenomenon, not entirely understood by experts. One widely-agreed method of assessing loudness is by measuring the sound pressure level (SPL), which is measured in decibels (dB) or bels (B, equal to ten decibels). In audio production communities, this is called "level." The level of an audio signal is one way of measuring the signal's perceived loudness. The level is part of the information stored in an audio file.

There are many different ways to monitor and adjust the level of an audio signal, and there is no widely-agreed practice. One reason for this situation is the technical limitations of recorded audio. Most level meters are designed so that the average level is -6 dB on the meter, and the maximum level is 0 dB. This practice was developed for analog audio. We recommend using an external meter and the "K-system," described in a link below. The K-system for level metering was developed for digital audio.

In the Musicians' Guide, this term is called "volume level," to avoid confusion with other levels, or with perceived volume or loudness.

For more information, refer to these web pages:

- "Level Practices" (the type of meter described here is available in the "jkmeter" package from Planet CCRMA at Home).

- "K-system"

- "Headroom"

- "Equal-loudness contour"

- "Sound level meter"

- "Listener fatigue"

- "Dynamic range compression"

- "Alignment level"

Panning and Balance

Panning adjusts the portion of a channel's signal that is sent to each output channel. In a stereophonic (two-channel) setup, the two channels represent the "left" and the "right" speakers. Two channels of recorded audio are available in the DAW, and the default setup sends all of the "left" recorded channel to the "left" output channel, and all of the "right" recorded channel to the "right" output channel. Panning sends some of the left recorded channel's level to the right output channel, or some of the right recorded channel's level to the left output channel. Each recorded channel has a constant total output level, which is divided between the two output channels.

The default setup for a left recorded channel is for "full left" panning, meaning that 100% of the output level is output to the left output channel. An audio engineer might adjust this so that 80% of the recorded channel's level is output to the left output channel, and 20% of the level is output to the right output channel. An audio engineer might make the left recorded channel sound like it is in front of the listener by setting the panner to "center," meaning that 50% of the output level is output to both the left and right output channels.

Balance is sometimes confused with panning, even on commercially-available audio equipment. Adjusting the balance changes the volume level of the output channels, without redirecting the recorded signal. The default setting for balance is "center," meaning 0% change to the volume level. As you adjust the dial from "center" toward the "full left" setting, the volume level of the right output channel is decreased, and the volume level of the left output channel remains constant. As you adjust the dial from "center" toward the "full right" setting, the volume level of the left output channel is decreased, and the volume level of the right output channel remains constant. If you set the dial to "20% left," the audio equipment would reduce the volume level of the right output channel by 20%, increasing the perceived loudness of the left output channel by approximately 20%.

You should adjust the balance so that you perceive both speakers as equally loud. Balance compensates for poorly set up listening environments, where the speakers are not equal distances from the listener. If the left speaker is closer to you than the right speaker, you can adjust the balance to the right, which decreases the volume level of the left speaker. This is not an ideal solution, but sometimes it is impossible or impractical to set up your speakers correctly. You should adjust the balance only at final playback.

Time, Timeline and Time-Shifting

There are many ways to measure musical time. The four most popular time scales for digital audio are:

- Bars and Beats: Usually used for MIDI work, and called "BBT," meaning "Bars, Beats, and Ticks." A tick is a partial beat.

- Minutes and Seconds: Usually used for audio work.

- SMPTE Timecode: Invented for high-precision coordination of audio and video, but can be used with audio alone.

- Samples: Relating directly to the format of the underlying audio file, a sample is the shortest possible length of time in an audio file. See this section for more information on samples.

Most audio software, particularly digital audio workstations (DAWs), allow the user to choose which scale they prefer. DAWs use a timeline to display the progression of time in a session, allowing you to do time-shifting; that is, adjust the time in the timeline when a region starts to be played.

Time is represented horizontally, where the leftmost point is the beginning of the session (zero, regardless of the unit of measurement), and the rightmost point is some distance after the end of the session.

Synchronization

Synchronization is synchronizing the operation of multiple tools, frequently the movement of the transport. Synchronization also controls automation across applications and devices. MIDI signals are usually used for synchronization.

Routing and Multiplexing

Routing audio transmits a signal from one place to another - between applications, between parts of applications, or between devices. On Linux systems, the JACK Audio Connection Kit is used for audio routing. JACK-aware applications (and PulseAudio ones, if so configured) provide inputs and outputs to the JACK server, depending on their configuration. The QjackCtl application can adjust the default connections. You can easily reroute the output of a program like FluidSynth so that it can be recorded by Ardour, for example, by using QjackCtl.

Multiplexing allows you to connect multiple devices and applications to a single input or output. QjackCtl allows you to easily perform multiplexing. This may not seem important, but remember that only one connection is possible with a physical device like an audio interface. Before computers were used for music production, multiplexing required physical devices to split or combine the signals.

Multichannel Audio

An audio channel is a single path of audio data. Multichannel audio is any audio which uses more than one channel simultaneously, allowing the transmission of more audio data than single-channel audio.

Audio was originally recorded with only one channel, producing "monophonic," or "mono" recordings. Beginning in the 1950s, stereophonic recordings, with two independent channels, began replacing monophonic recordings. Since humans have two independent ears, it makes sense to record and reproduce audio with two independent channels, involving two speakers. Most sound recordings available today are stereophonic, and people have found this mostly satisfying.

There is a growing trend toward five- and seven-channel audio, driven primarily by "surround-sound" movies, and not widely available for music. Two "surround-sound" formats exist for music: DVD Audio (DVD-A) and Super Audio CD (SACD). The development of these formats, and the devices to use them, is held back by the proliferation of headphones with personal MP3 players, a general lack of desire for improvement in audio quality amongst consumers, and the copy-protection measures put in place by record labels. The result is that, while some consumers are willing to pay higher prices for DVD-A or SACD recordings, only a small number of recordings are available. Even if you buy a DVD-A or SACD-capable player, you would need to replace all of your audio equipment with models that support proprietary copy-protection software. Without this equipment, the player is often forbidden from outputting audio with a higher sample rate or sample format than a conventional audio CD. None of these factors, unfortunately, seem like they will change in the near future.